
12/4/2023 Cross-entropy loss

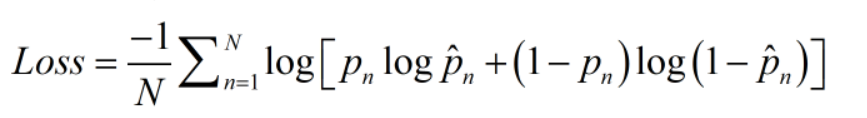

Cross-entropy Loss Explained with Python Example

Common questions about cross-entropy loss include:

- What is the purpose of cross-entropy loss in machine learning?
- What type of problem is cross-entropy loss best suited for?
- How does cross-entropy loss compare to mean squared error loss in terms of performance?
- What is the relationship between cross-entropy loss and log-likelihood?
- How does cross-entropy loss help to prevent overfitting?

Cross-entropy loss, also known as negative log-likelihood loss, is a commonly used loss function in machine learning for classification problems. The function measures the difference between the predicted probability distribution and the true distribution of the target variables. It is commonly used in supervised learning problems with multiple classes, such as in a neural network with softmax activation. During training, the cross-entropy loss serves as the optimization objective: the model's parameters are adjusted to reduce it.

More specifically, cross-entropy loss is an objective function for training classification models that predict the probability (a value between 0 and 1) that a data point belongs to one class or another. When the predicted probability of a class is far from the actual class label (0 or 1), the cross-entropy loss is high. When the predicted probability is close to the class label, the loss is low.

Cross-entropy loss is commonly used as the loss function for models that have a softmax output. Recall that the softmax function generalizes the logistic (sigmoid) function to multiple dimensions and is used in multinomial logistic regression.

Cross-entropy loss is commonly used in machine learning algorithms such as:

- Neural networks, specifically in the output layer, to calculate the difference between the predicted probability and the true label during training.
- Logistic Regression and Softmax Regression.
- Multinomial Logistic Regression and Maximum Entropy Classifier.

For more detail, see one of my related posts: Softmax regression explained with Python example.

Cross-entropy loss, or log loss, is used as a cost function for logistic regression models and for models with softmax output (multinomial logistic regression or neural networks) in order to estimate the parameters. Here is what the function looks like:

Fig 1.

The above cost function can be derived from the original likelihood function, which is maximized when training a logistic regression model. Here is what the likelihood function looks like:

Fig 2. Likelihood function to be maximized for Logistic Regression
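To make the behaviour described above concrete, here is a minimal sketch in plain Python. It is not the post's original example. The function names (`binary_cross_entropy`, `softmax`, `categorical_cross_entropy`), the clipping constant `eps`, and the sample labels and probabilities are all assumptions chosen for illustration. The binary version uses the standard log-loss form, the mean of -[y log(p) + (1 - y) log(1 - p)], and the multi-class version takes the negative log of the probability that softmax assigns to the true class.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy (log loss) over a batch.

    y_true: iterable of labels, each 0 or 1.
    y_prob: iterable of predicted probabilities for class 1.
    eps:    clipping constant (an assumption of this sketch) that
            keeps log() away from 0.
    """
    total = 0.0
    n = 0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip into (0, 1)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
        n += 1
    return total / n


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def categorical_cross_entropy(true_class, probs, eps=1e-12):
    """Cross-entropy for one sample whose true label is one-hot."""
    return -math.log(max(probs[true_class], eps))


# Illustrative data (hypothetical): same labels, two sets of predictions.
labels = [1, 0, 1]
good = binary_cross_entropy(labels, [0.9, 0.1, 0.8])  # close to labels
bad = binary_cross_entropy(labels, [0.2, 0.7, 0.3])   # far from labels
print(f"close predictions:   {good:.4f}")
print(f"far-off predictions: {bad:.4f}")

# Multi-class case: softmax output followed by cross-entropy.
probs = softmax([2.0, 1.0, 0.1])
print(f"loss if class 0 is true: {categorical_cross_entropy(0, probs):.4f}")
print(f"loss if class 2 is true: {categorical_cross_entropy(2, probs):.4f}")
```

Running the sketch should show a smaller loss for predictions close to the labels than for predictions far from them. In the softmax case, the loss should be smaller when the true class is the one that received the highest score.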
